Conditional on Yourself

Conditioning shows up everywhere in probability theory and statistics. It is perhaps one of the most powerful tools in probability theory, since in science we're often interested in how two things are related. As a simple example, linear regression is, in essence, a question about a conditional expectation: the expected value of one variable given the value of another.

Typically, when we see conditioning, we condition one random variable on a different random variable. That is, we might have two random variables \(X\) and \(Y\), which really constitute a single object¹ \((X, Y)\) with a single distribution function \(F_{(X, Y)}(x, y)\). Technically, the distribution function \(F_{(X, Y)}\) tells us everything we need to know. We might then be interested in what values \(Y\) takes, given that we see a particular value of \(X\). That is, we're interested in the distribution of a new object, \(Y | X = x\). We can handle this by defining a conditional distribution function \(F_{Y | X}\) using the usual definitions from probability theory, \[ F_{Y | X} (y | x) = P(Y \leq y | X = x) = \frac{P(Y \leq y, X = x)}{P(X = x)}.\] You might be annoyed by the equality \(X = x\), since for a continuous random variable \(X\) this event has probability zero, leaving us with \(0/0\). But since the equality appears in both the numerator and the denominator, 'there is hope,' to quote two of my Greek professors. You can give this definition meaning by conditioning on \(X\) lying in a small interval around \(x\), applying the Mean Value Theorem², and letting the interval shrink to zero.
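To make the 'there is hope' remark a bit more tangible, here is a minimal simulation sketch. It assumes, purely for illustration, that \((X, Y)\) is a standard bivariate normal with correlation \(\rho\), in which case \(Y | X = x \sim N(\rho x, 1 - \rho^2)\) exactly. Instead of the zero-probability event \(X = x\), the sketch conditions on the thin slab \(|X - x| < h\) and compares the resulting frequency to the exact conditional distribution function. The function names, the slab half-width `h`, and the seed are my own hypothetical choices, not anything from a library.

```python
import math
import random
from statistics import NormalDist


def conditional_cdf_estimate(y, x, rho, h, n_draws, seed=0):
    """Estimate P(Y <= y | X = x) by conditioning on the thin slab |X - x| < h.

    Assumes (X, Y) is standard bivariate normal with correlation rho.
    """
    rng = random.Random(seed)
    scale = math.sqrt(1.0 - rho ** 2)
    in_slab = 0
    in_slab_and_below = 0
    for _ in range(n_draws):
        x_draw = rng.gauss(0.0, 1.0)
        # Y = rho * X + sqrt(1 - rho^2) * Z has correlation rho with X.
        y_draw = rho * x_draw + scale * rng.gauss(0.0, 1.0)
        if abs(x_draw - x) < h:
            in_slab += 1
            if y_draw <= y:
                in_slab_and_below += 1
    if in_slab == 0:
        raise ValueError("no draws landed in the slab; increase h or n_draws")
    # Ratio of P(Y <= y, X near x) to P(X near x), both estimated by counts.
    return in_slab_and_below / in_slab


def conditional_cdf_exact(y, x, rho):
    # For the standard bivariate normal, Y | X = x ~ N(rho * x, 1 - rho^2).
    return NormalDist(rho * x, math.sqrt(1.0 - rho ** 2)).cdf(y)


estimate = conditional_cdf_estimate(y=0.5, x=1.0, rho=0.8, h=0.05, n_draws=200_000)
exact = conditional_cdf_exact(y=0.5, x=1.0, rho=0.8)
print(f"slab estimate: {estimate:.3f}, exact: {exact:.3f}")
```

Shrinking `h` (while increasing `n_draws` so the slab still catches enough points) should bring the estimate closer to the exact value, which is the limiting idea behind the definition.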

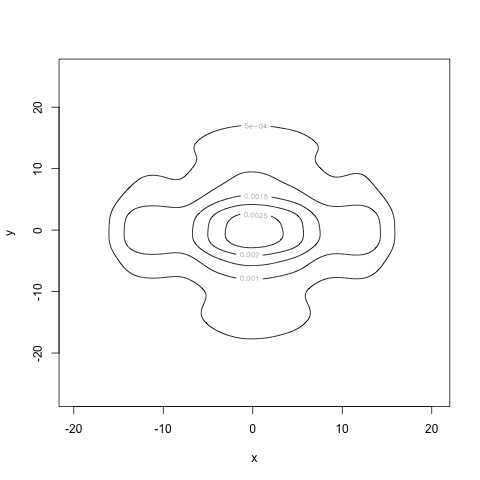

All of this is a prelude to something else: conditioning a random variable on itself. This came up in two unrelated places: boxcar kernel smoothing and mean residual time. The basic idea is simple. We know something is true about a random variable, for example that it took on a value in some range, but that's all we know. What can we now say about the distribution of the random variable?

To be more concrete, suppose we have a continuous random variable \(X\) whose range is the entire real line. We know that the random variable lies between zero and four, but nothing else. What can we say about the distribution of the random variable now?

Intuitively³, we could think of this as collecting a bunch of random draws \(X_{1}, \ldots, X_{n}\), keeping only those that fall in the interval \((0, 4)\) (this is the conditioning⁴ part), and then asking how those draws are distributed. Taking the limit as the number of draws goes to infinity, we recover the distribution we're seeking.
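The frequency picture translates almost directly into code. A minimal sketch, assuming \(X \sim N(0, 1)\) (any distribution supported on the whole real line would do): draw many values, throw away the ones outside \((0, 4)\), and treat what's left as draws of \(X | X \in (0, 4)\). This is the same idea as rejection sampling; the helper name `draws_given_interval` is hypothetical.

```python
import random


def draws_given_interval(n_draws, low, high, seed=0):
    """Draw X ~ N(0, 1) n_draws times and keep only the draws in (low, high).

    The kept draws are samples from X | X in (low, high).
    """
    rng = random.Random(seed)
    draws = (rng.gauss(0.0, 1.0) for _ in range(n_draws))
    return [x for x in draws if low < x < high]


kept = draws_given_interval(100_000, 0.0, 4.0)
# Roughly half the draws survive, since P(0 < X < 4) is just under 1/2.
print(len(kept), min(kept), max(kept))
```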

We can write down the density function without much work. We're interested in the distribution of \(X | X \in (0, 4)\). Pretending that we just have two random variables, we write down \[f(x | X \in (0, 4)) = \frac{f(x, X \in (0, 4))}{P(X \in (0, 4))} = \frac{f(x) 1_{(0,4)}(x)}{\int_{(0, 4)} f(u) \, du} = c f(x) 1_{(0,4)}(x),\] where \(1_{(0, 4)}(x)\) is the handy indicator function, taking the value 1 when \(x \in (0, 4)\) and 0 otherwise. Deconstructing this, it makes perfect sense. We're certainly restricted to the interval \((0, 4)\), which the indicator function takes care of, but the density also has to integrate to 1, which the normalizing constant \(c = \frac{1}{\int_{(0, 4)} f(u) \, du}\) takes care of. Basically, the density in the region, conditioned on the fact that we're in the region, has the same shape as the unconditional density over the region, just scaled up by the factor \(c \geq 1\).
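Here is a small sketch of the formula above, assuming we have both the density \(f\) and the distribution function \(F\) of \(X\), so that the normalizing integral is just \(F(4) - F(0)\). The standard normal from Python's `statistics.NormalDist` stands in for \(f\); the helper `conditional_density` is a hypothetical name. A midpoint-rule sum checks that the result really integrates to 1.

```python
from statistics import NormalDist


def conditional_density(pdf, cdf, low, high):
    """Return the density of X | X in (low, high), i.e. c * f(x) * 1_(low, high)(x), and c."""
    mass = cdf(high) - cdf(low)  # P(X in (low, high)) = integral of f over the interval
    c = 1.0 / mass               # normalizing constant
    def density(x):
        # Same shape as f inside the interval, scaled by c; zero outside.
        return c * pdf(x) if low < x < high else 0.0
    return density, c


std_normal = NormalDist(0.0, 1.0)
g, c = conditional_density(std_normal.pdf, std_normal.cdf, 0.0, 4.0)

# Midpoint-rule check that g integrates to (approximately) 1 over (0, 4).
n = 10_000
width = 4.0 / n
total = sum(g((i + 0.5) * width) for i in range(n)) * width
print(f"c = {c:.4f}, integral of g = {total:.6f}")
```

For the standard normal, \(P(X \in (0, 4))\) is just under \(1/2\), so \(c\) comes out just above 2: the conditional density is essentially the right half of the bell curve, doubled.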

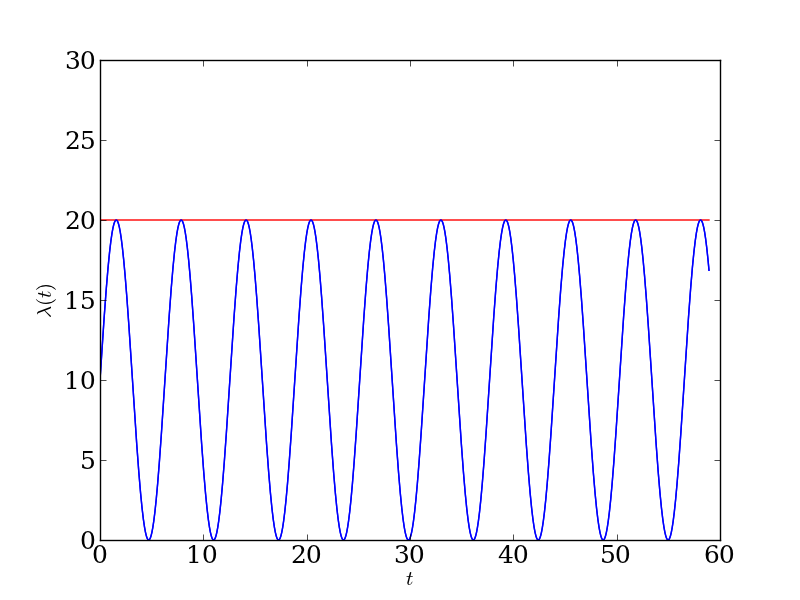

To be super concrete, suppose \(X \sim N(0, 1)\). Then we can both estimate the conditional density (using histograms) and compute it analytically. In which case we get a figure like this:

Blue is the original density, red is the conditional density, and the histograms are, well, self-explanatory.
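The figure itself isn't reproduced here, but the comparison behind it can be sketched in text. Assuming \(X \sim N(0, 1)\) and the interval \((0, 4)\), the code keeps the draws that land in the interval, bins them into a histogram, and compares each bin's share of the kept draws with the probability that the analytic conditional density \(c f(x) 1_{(0,4)}(x)\) assigns to that bin. The number of bins, the number of draws, and the seed are arbitrary choices of mine.

```python
import random
from statistics import NormalDist

std_normal = NormalDist(0.0, 1.0)
low, high = 0.0, 4.0
c = 1.0 / (std_normal.cdf(high) - std_normal.cdf(low))

# Keep only the standard normal draws that land in (low, high).
rng = random.Random(1)
kept = [x for x in (rng.gauss(0.0, 1.0) for _ in range(200_000)) if low < x < high]

# Histogram of the kept draws on equal-width bins.
n_bins = 8
bin_width = (high - low) / n_bins
counts = [0] * n_bins
for x in kept:
    counts[min(int((x - low) / bin_width), n_bins - 1)] += 1

# Compare each bin's empirical share with the analytic conditional probability.
max_gap = 0.0
for i, count in enumerate(counts):
    left = low + i * bin_width
    right = left + bin_width
    empirical = count / len(kept)
    analytic = c * (std_normal.cdf(right) - std_normal.cdf(left))
    max_gap = max(max_gap, abs(empirical - analytic))
    print(f"[{left:.1f}, {right:.1f}): empirical {empirical:.4f}, analytic {analytic:.4f}")
```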

This probably seems face-palm obvious, but for some reason I felt the need to work it out.

¹ This single object is called, unimaginatively, a random vector. I'm not sure why this vector idea isn't introduced earlier in intermediate probability theory courses. One possible reason: showing that a random vector is a reasonable probabilistic object involves hairy measure-theoretic arguments, such as showing that the Borel σ-algebra of a product space is the product of the Borel σ-algebras. If that doesn't make any sense, don't worry about it.

² By the standards of measure-theoretic probability, I'm being very imprecise. If you want to get a flavor for how an analyst would deal with these things, consider this Wikipedia blurb.

³ One of the nice things about the frequency interpretation of probability theory is that it does lend itself to intuition in these sorts of situations.

⁴ When I first learned probability theory, I frequently confused conditioning and and-ing (for lack of a better term). That is, I confused the probability that this and that both occur with the probability that this occurs given that that occurs. For the first, you count up all of the times that both things occur, out of all the trials. For the second, you first see how many times the second thing occurs, and of those, count up how many times the first thing also occurs. The difference is obvious when you write down the definitions, but the intuition takes some work.
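The counting recipe in this footnote can be made concrete with a toy example of my own: one roll of a fair die, with \(A\) = 'the roll is even' and \(B\) = 'the roll is at least 4'. Exact fractions keep the arithmetic visible.

```python
from fractions import Fraction

# Sample space: one roll of a fair six-sided die (an illustrative choice).
outcomes = range(1, 7)
A = {2, 4, 6}  # "the roll is even"
B = {4, 5, 6}  # "the roll is at least 4"

# And-ing: count outcomes where both happen, out of all outcomes.
p_a_and_b = Fraction(len(A & B), len(outcomes))

# Conditioning: first restrict to outcomes where B happens,
# then count how many of those also have A.
p_a_given_b = Fraction(len(A & B), len(B))

print(p_a_and_b, p_a_given_b)  # 1/3 2/3
```

Both use the same count (the outcomes \(\{4, 6\}\)); they differ only in the denominator: all six outcomes for the 'and', only the three outcomes in \(B\) for the conditional.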